Wyndham Sellers

DIRECTOR OF ENGINEERING

The results of how we deliver on our mission motivate me every day to do my best and deliver quality products and services. We significantly improve community health and safety, which helps me fulfill my life’s mission.

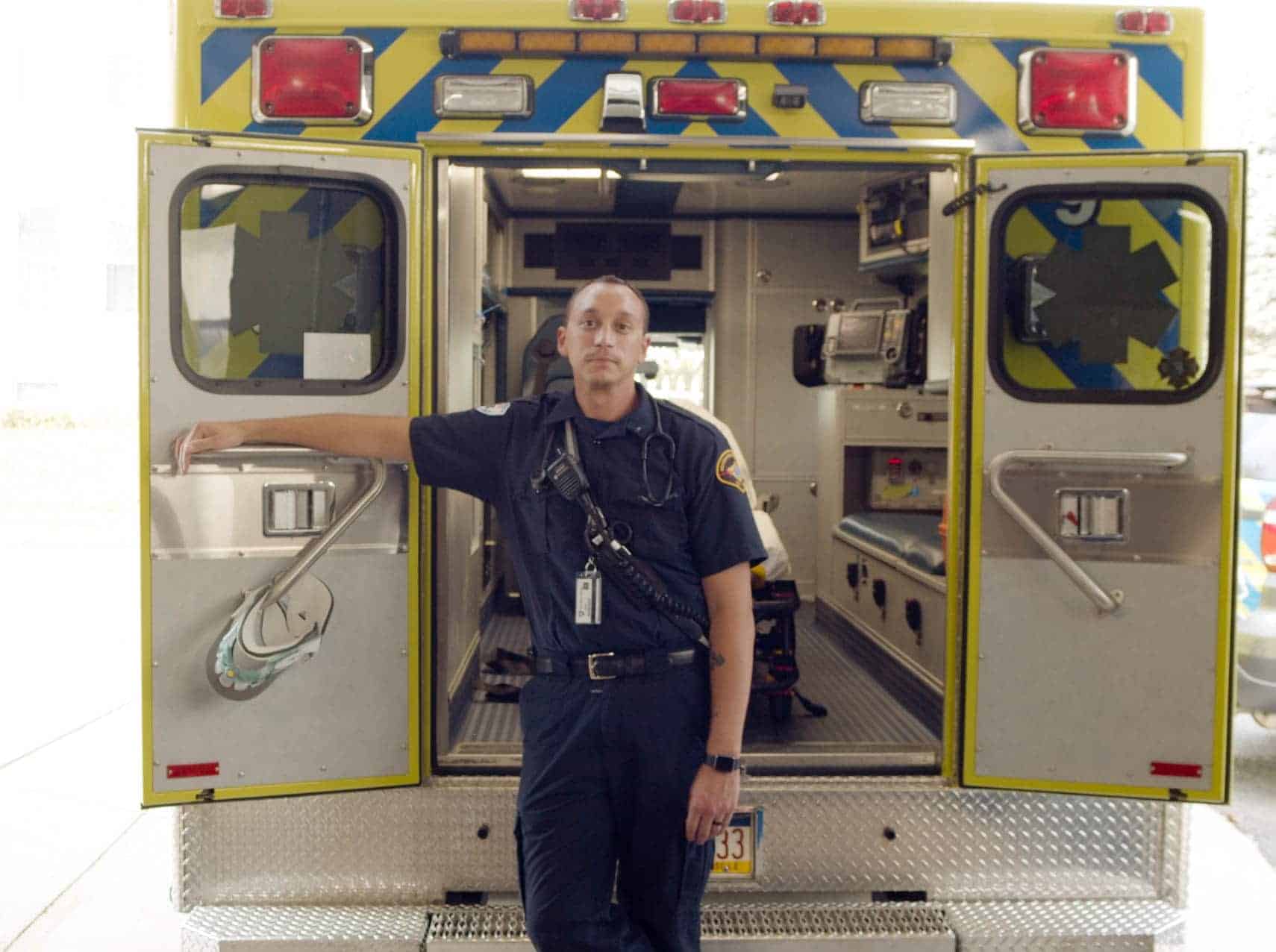

Dale Mount

EMS Chief, McMinnville FD

There are fingerprints of paramedics in ESO… You can see that it’s written from a medic perspective.

What We Do

Since 2004, we’ve been pioneers in creating innovative, user-friendly software to meet the changing needs of today’s EMS agencies, fire departments, hospitals, and state EMS offices. We are the largest software and data solutions provider to EMS agencies and fire departments…and we’re just getting started.

ESO News

Read the latest press releases and informative articles.